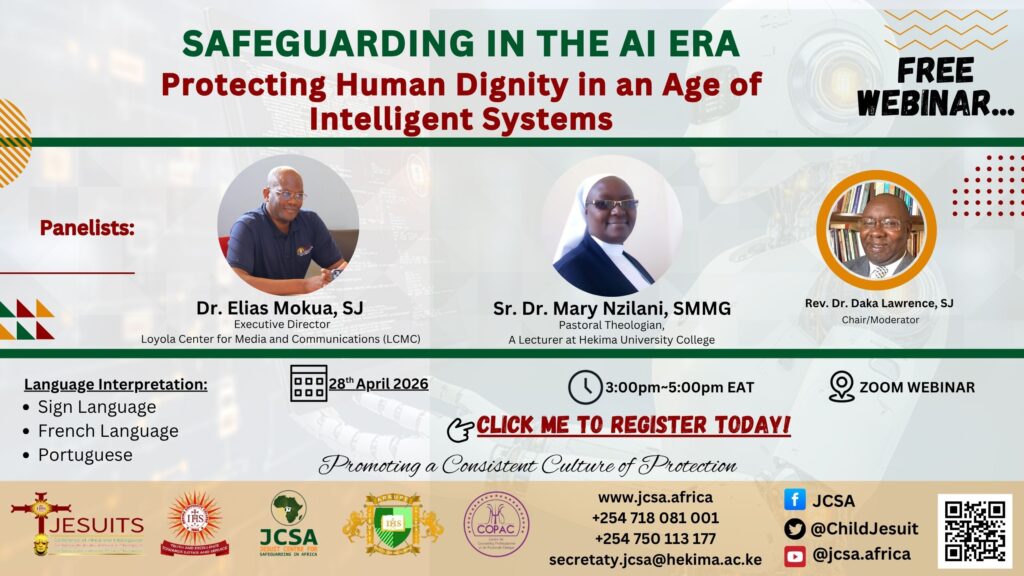

The rapid advancement of artificial intelligence (AI) is reshaping how societies communicate, make decisions, and relate to one another. In a recent webinar, "Safeguarding in the AI Era: Protecting Human Dignity in an Age of Intelligent Systems", Dr. Elias Mokua, SJ, examined this transformation through a safeguarding lens, emphasising the ethical, pastoral, and practical responsibilities that arise in a digital age. His central concern was clear: while AI offers immense potential, it also presents significant risks to human dignity, particularly for vulnerable individuals and communities.

At its core, AI represents a form of intelligence external to the human person. Unlike natural, God-given intelligence, artificial intelligence is designed to augment human capabilities, processing data, identifying patterns, and making predictions at unprecedented speed and scale. However, this very power introduces a tension: technologies created to serve humanity can, if left unchecked, undermine the very dignity they are meant to enhance.

Dr. Mokua framed safeguarding within this evolving context as both an enduring moral obligation and a newly complex challenge. Traditionally, safeguarding has focused on protecting minors and vulnerable adults from physical, emotional, and psychological harm. In the AI era, this responsibility expands into digital spaces where harm can be less visible but equally damaging. Online exploitation, data manipulation, misinformation, and algorithmic bias are no longer abstract risks; they are concrete realities shaping lived experiences.

One of the key concerns highlighted in the webinar was the handling of data. AI systems depend heavily on vast quantities of data, much of which includes personal and sensitive information. When improperly managed, such data can be exploited, leading to violations of privacy and autonomy. For safeguarding practitioners, this raises urgent questions: Who controls this data? How is it used? And whose interests does it ultimately serve? Without clear ethical frameworks, there is a danger that individuals, especially the vulnerable, will be reduced to data points rather than persons with inherent dignity.

Closely related is the problem of bias embedded within AI systems. Algorithms are not neutral; they reflect the assumptions, values, and limitations of those who design them. If these systems are trained on biased data, they can perpetuate discrimination and exclusion. In safeguarding contexts, this could mean overlooking those most at risk or misidentifying threats based on flawed patterns. Dr. Mokua stressed the importance of critical engagement with AI tools, urging institutions to question not only how these systems function but also whose voices and experiences they represent.

Another dimension of concern is the spread of misinformation and manipulated content. AI technologies can generate highly convincing text, images, and videos, blurring the line between truth and fabrication. This has profound implications for safeguarding, as false narratives can damage reputations, incite harm, or obscure real cases of abuse. The capacity to create deepfakes or automated propaganda challenges traditional methods of verification and calls for a renewed commitment to truth in communication.

Dr. Mokua also highlighted the psychological and relational impacts of AI. As people increasingly interact with machines through chatbots, virtual assistants, and automated systems, there is a risk of diminishing authentic human connection. For safeguarding, which often relies on trust, empathy, and attentive presence, this shift is significant. Technology should not replace human relationships but rather support them. Maintaining this balance requires intentionality and discernment.

Importantly, the webinar did not present AI as inherently harmful. On the contrary, Dr. Mokua acknowledged its potential as a tool for good. AI can assist in identifying patterns of abuse, supporting reporting mechanisms, and enhancing training and awareness. It can help organisations respond more efficiently and allocate resources where they are most needed. However, these benefits depend on responsible use grounded in ethical principles.

To navigate this landscape, Dr. Mokua proposed several guiding considerations. First is the primacy of human dignity. Every technological decision must be evaluated in light of its impact on the human person, particularly the most vulnerable. Efficiency and innovation cannot come at the expense of respect, justice, and care.

Second is accountability. Institutions must take responsibility for the tools they adopt and the systems they implement. This includes establishing clear policies, ensuring transparency, and creating oversight mechanisms. Safeguarding in the AI era requires not only technical competence but also moral clarity.

Third is education and formation. Those involved in safeguarding, whether in pastoral, educational, or organisational roles, need to understand the basics of AI and its implications. This is not about becoming technical experts but about developing informed awareness. Such knowledge enables more effective decision-making and fosters a culture of vigilance.

Fourth is collaboration. The challenges posed by AI are too complex to be addressed in isolation. They require dialogue across disciplines, including technology, ethics, theology, law, and social sciences. By working together, stakeholders can develop more holistic and context-sensitive approaches to safeguarding.

Finally, Dr. Mokua emphasised the importance of a values-driven approach to technology. AI should be guided not only by what is possible but by what is right. This involves ongoing reflection, discernment, and commitment to the common good. In a world increasingly shaped by intelligent systems, safeguarding becomes a shared responsibility that extends beyond traditional boundaries.

In conclusion, safeguarding in the AI era demands both continuity and change. The fundamental commitment to protecting human dignity remains unchanged, but the context in which it is lived out has evolved significantly. As AI continues to influence every aspect of life, the task is not to resist technological progress but to shape it responsibly. By placing the human person at the centre, fostering accountability, and embracing ethical discernment, institutions can ensure that AI serves as a tool for protection rather than harm.